Please note this is a DRAFT and may change throughout the day (1 June 2011)

On June 17 I will be joining other researchers at a Patent Data Workshop jointly hosted by the USPTO and NSF at the U.S. Patent & Trademark Office in Alexandria, VA. This workshop, supported by the USPTO Office of Chief Economist and the Science of Science and Innovation Policy Program (SciSIP) at the NSF, will bring researchers together to share their ideas on how to facilitate the more efficient use of patent and trademark data, and ultimately to improve both the quantity and caliber of innovation policy scholarship.

The stated goals of this workshop include:

- Creating an information exchange infrastructure for both the production and informed evaluation of transparent, high-quality research into innovation;

- Promoting an intellectual environment particularly hospitable to high-impact quantitative studies;

- Creating a distinct community with well-developed research norms and cumulative influence; and

- Championing the development of a platform to support a robust body of empirical research into the economic and social consequences of innovation.

Each participant planning to attend this workshop has been asked to prepare a blog post that outlines (a) our understanding of the most significant theoretical or empirical challenges in this space, and/or (b) where the frontier of knowledge is, what innovative things are being done at the frontier — or within reach of being done to solve the set of problems — and where targeted funding could yield the highest payoffs in getting to solutions. The purpose of this post is to offer some of my thoughts based on progress made by linked open government data initiatives in the US and around the world.

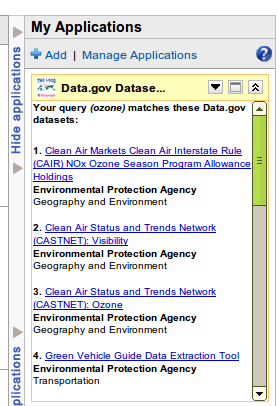

Background: The Tetherless World and Linked Open Government Data

Since early 2010 the Tetherless World Constellation (TWC) at Rensselaer Polytechnic Institute has collaborated with the White House Data.gov team to make thousands of open government datasets more accessible for consumption by web-based applications and services, including mashups leveraging Semantic Web technologies. TWC has created an infrastructure, embodied by the TWC LOGD Portal, that automatically converts government data published in tabular (e.g. CSV) format to RDF and enhances it; publishes the converted datasets as downloadable “dump files” and through SPARQL endpoints; and demonstrates effective methodologies for using such linked open government data assets as the basis for the agile creation of lightweight, powerful visualizations and other mashups. In addition to providing a searchable interface to thousands of converted Data.gov datasets, the TWC LOGD Portal publishes a growing set of demos and tutorials for use by the LOGD community.
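To make the tabular-to-RDF step concrete, here is a minimal sketch of the general idea, not the TWC LOGD converter itself. It assumes a hypothetical base URI (`http://example.org/...`), a made-up three-column CSV, and a naive rule of one subject per row and one triple per non-empty cell, emitted as N-Triples.

```python
import csv
import io

# Hypothetical namespace for the converted dataset (an assumption, not a real LOGD URI).
BASE = "http://example.org/dataset/patents/"


def csv_to_ntriples(csv_text, key_column):
    """Turn each CSV row into N-Triples: one subject per row, one triple per non-empty cell."""
    triples = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        subject = f"<{BASE}row/{row[key_column]}>"
        for column, value in row.items():
            if column == key_column or value == "":
                continue
            # Derive a predicate URI from the column header.
            predicate = f"<{BASE}vocab/{column.strip().lower().replace(' ', '_')}>"
            # Escape backslashes and double quotes for the N-Triples literal.
            literal = value.replace("\\", "\\\\").replace('"', '\\"')
            triples.append(f'{subject} {predicate} "{literal}" .')
    return triples


# Made-up sample data for illustration only.
SAMPLE = "patent_id,title,assignee\nX001,Widget,Acme Corp\n"

for line in csv_to_ntriples(SAMPLE, "patent_id"):
    print(line)
```

A real conversion would also assign datatypes, mint stable URIs for entities such as assignees, and record provenance; this sketch only shows the row-to-triples mapping.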

The Data.gov/TWC LOGD partnership and similar international LOGD efforts, especially the UK’s Data.gov.uk initiative, have demonstrated the value and potential for innovation achieved by exposing government data using linked data principles. Indeed, the effective application of the linked data approach to a multitude of data sharing and integration challenges in commerce, industry and eScience has shown its promise as a basis for a more efficient, agile research information exchange infrastructure.

Recommendation: Create a “DBPedia” for Patent Data

The Linked Open Data Cloud diagram famously illustrates the growing number of providers of linked open data around the world. Careful examination of the LOD Cloud shows that most sources are sparsely linked, while a very few, most notably DBPedia.org, are extremely heavily linked. The reason is that the Web of Data has increasingly adopted DBPedia as a reliable source, or hub, for canonical entity URIs. As providers put their datasets online, they enhance them by providing sameAs links to DBPedia URIs for the named entities they contain. This makes the datasets easy to link to other datasets and increases their utility and value as the basis for visualizations and linked data mashups.
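The hub effect can be sketched in a few lines. The example below assumes two hypothetical datasets (the `example.org` URIs are invented) that each declare an `owl:sameAs` link from a local URI to the same DBPedia resource; grouping by the hub URI is enough to discover that the two local records describe the same entity.

```python
from collections import defaultdict

OWL_SAME_AS = "http://www.w3.org/2002/07/owl#sameAs"

# Two hypothetical datasets, each with its own local URI for the same organization.
patent_links = [
    ("http://example.org/patents/assignee/42", OWL_SAME_AS,
     "http://dbpedia.org/resource/IBM"),
]
grant_links = [
    ("http://example.org/grants/org/ibm-corp", OWL_SAME_AS,
     "http://dbpedia.org/resource/IBM"),
]


def link_via_hub(*datasets):
    """Group local URIs by the hub URI they are declared sameAs.

    Only hub URIs referenced by more than one local URI are returned,
    since those are the ones that actually connect records.
    """
    by_hub = defaultdict(set)
    for triples in datasets:
        for subject, predicate, obj in triples:
            if predicate == OWL_SAME_AS:
                by_hub[obj].add(subject)
    return {hub: sorted(local) for hub, local in by_hub.items() if len(local) > 1}


for hub, local_uris in link_via_hub(patent_links, grant_links).items():
    print(hub, "<-", local_uris)
```

Neither provider needs to know about the other: agreeing on the hub URI is what makes the join possible.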

Providers embrace DBPedia’s URI conventions as “canonical” in order to make their datasets more easily adopted. Our objective with patent and trademark reference data, and research information in general, must be to break down barriers to its widespread use, recognizing that we may have no idea how it may be used. Linked data principles and the Web of Data emerging from them have rewritten what it means to make data integration easy. Whereas even a few short years ago it was useful simply to provide a searchable patent database through a proprietary UI, next-generation innovation infrastructures will be based on globally interlinked graphs driven by concept and descriptive metadata extracted from patent records, research publications, business publications and indeed data from social networks. Scholars of innovation will traverse these graphs and mash them with other graphs in ways we cannot anticipate, and thus make serendipitous discoveries about the process of innovation that we cannot predict today.

My DBPedia reference comes from the idea of identifying concepts and specific manifestations of innovation in the patent corpus. Consider an arbitrary patent disclosure; it can be represented as a graph of concepts and related manifestations. The infrastructure I’m proposing will enable the interlinking of URI-named concepts, not only with other patent records but also with scientific literature, the financial and news media, social networks, etc. From a research standpoint, this will enable the study of the emergence, spread and influence of innovations in many dimensions.
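As a rough illustration of what traversing such a graph might look like, the sketch below represents a patent, two papers and a news item as triples that point at shared concept URIs. All identifiers, including the `ex:mentionsConcept` predicate, are hypothetical and chosen only for this example.

```python
from collections import defaultdict

# (subject, predicate, object) triples; every URI and predicate here is hypothetical.
TRIPLES = [
    ("ex:patent/A", "ex:mentionsConcept", "ex:concept/graphene"),
    ("ex:patent/A", "ex:mentionsConcept", "ex:concept/transistor"),
    ("ex:paper/P1", "ex:mentionsConcept", "ex:concept/graphene"),
    ("ex:news/N1", "ex:mentionsConcept", "ex:concept/transistor"),
    ("ex:paper/P2", "ex:mentionsConcept", "ex:concept/solar_cell"),
]


def related_documents(triples, document):
    """Return other documents sharing a concept with `document`, with the shared concepts."""
    docs_by_concept = defaultdict(set)
    concepts_by_doc = defaultdict(set)
    for subject, predicate, obj in triples:
        if predicate == "ex:mentionsConcept":
            docs_by_concept[obj].add(subject)
            concepts_by_doc[subject].add(obj)

    related = defaultdict(set)
    # Walk document -> concept -> other documents that mention the same concept.
    for concept in concepts_by_doc[document]:
        for other in docs_by_concept[concept]:
            if other != document:
                related[other].add(concept)
    return {doc: sorted(concepts) for doc, concepts in sorted(related.items())}


for doc, shared in related_documents(TRIPLES, "ex:patent/A").items():
    print(doc, "shares", shared)
```

Because the concepts are named by URI, the same two-hop traversal works regardless of whether the connected document is a patent record, a journal article or a news story.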

Conclusions

The USPTO has already made great strides in improving access to and understanding of patent and trademark data; an excellent example is the Data Visualization Center and specific data visualization tools such as the Patent Dashboard, which provides graphic summaries of USPTO activities. These are “canned apps,” however; the next generation of open government will require finer-grained access to this data, presented as enhanced linked data and using open licensing principles. As USPTO datasets are presented in this way, researchers will be able to interlink this data with datasets from other sources, resulting in more effective study of the causes of innovation and indeed of the outcomes of government programs intended to stimulate innovation.

References

- NSF Patent Data Workshop. NSF Award Abstract #1102468 (31 Jan 2011).

- Julia Lane, The Science of Science and Innovation Policy (SciSIP) Program at the US National Science Foundation. OST Bridges vol. 22 (July 2009)
